With a data lake, you can store your structured and unstructured data in one centralized repository and at any scale. You can store data as is, without having to first structure it based on questions you might have in the future. Within each database in a data warehouse, you can organize your data into tables and columns that describe the data types in the table. The data warehouse software works across multiple types of storage hardware, such as solid state drives (SSDs), hard drives, and cloud storage, to optimize your data processing.
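As a rough illustration of that difference, the sketch below keeps the same records two ways: as raw JSON strings stored as is (lake-style), and in a table whose columns declare their data types (warehouse-style). The record fields, the `purchases` table, and the use of SQLite as a stand-in for a warehouse are all assumptions made for the example.

```python
import json
import sqlite3

# Hypothetical raw records; note that the two records do not share a shape.
raw_events = [
    '{"customer": "alice", "action": "purchase", "amount": 19.99}',
    '{"customer": "bob", "action": "view", "page": "/home"}',
]

# Lake-style: store the records unchanged and interpret them only when read.
lake = list(raw_events)
purchases = [e for e in map(json.loads, lake) if e.get("action") == "purchase"]

# Warehouse-style: the table declares its columns and their types up front.
conn = sqlite3.connect(":memory:")  # in-memory SQLite as a stand-in
conn.execute(
    "CREATE TABLE purchases (customer TEXT NOT NULL, amount REAL NOT NULL)"
)
conn.executemany(
    "INSERT INTO purchases VALUES (?, ?)",
    [(p["customer"], p["amount"]) for p in purchases],
)
total = conn.execute("SELECT SUM(amount) FROM purchases").fetchone()[0]
print(purchases, total)
```

The lake side accepts any record, while the typed table only accepts rows that fit its declared columns, which is what makes it straightforward to query.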

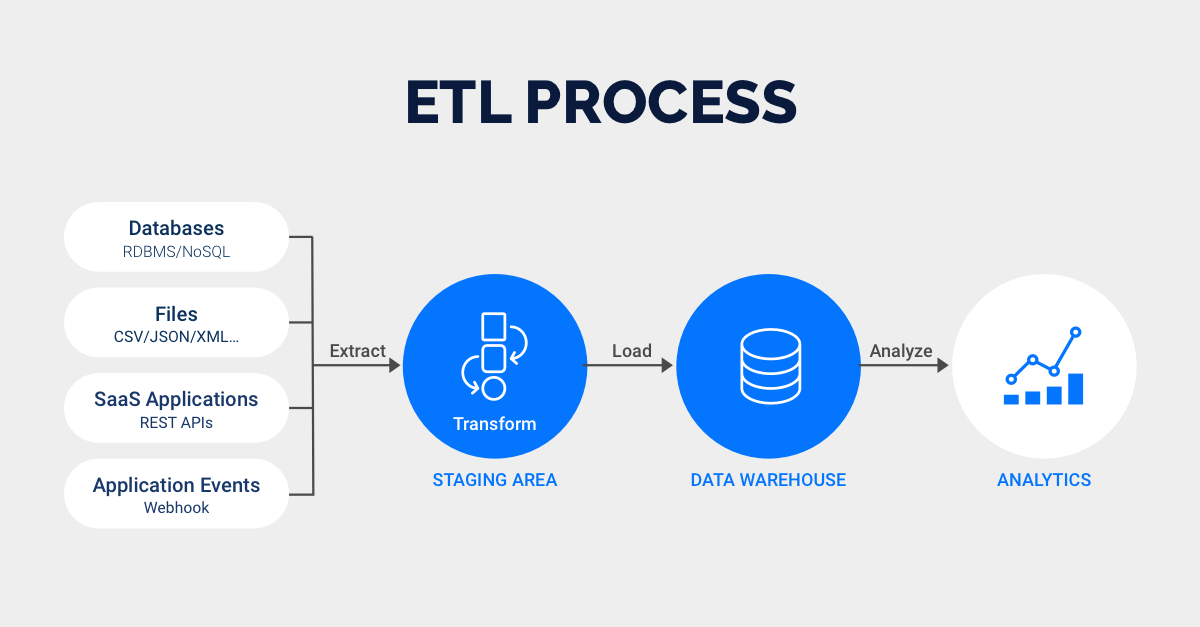

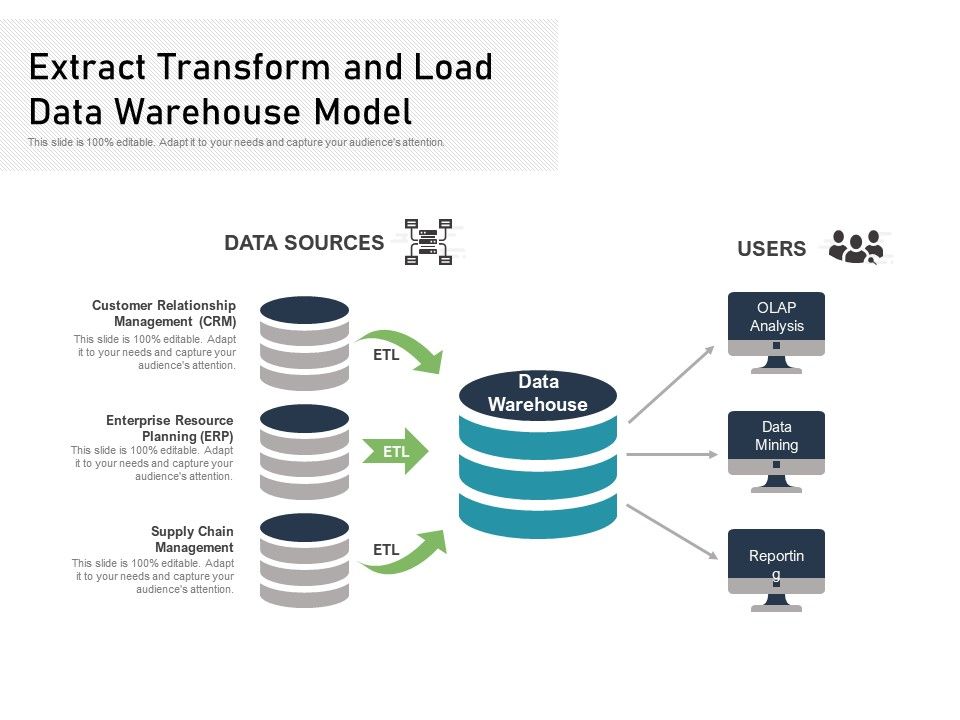

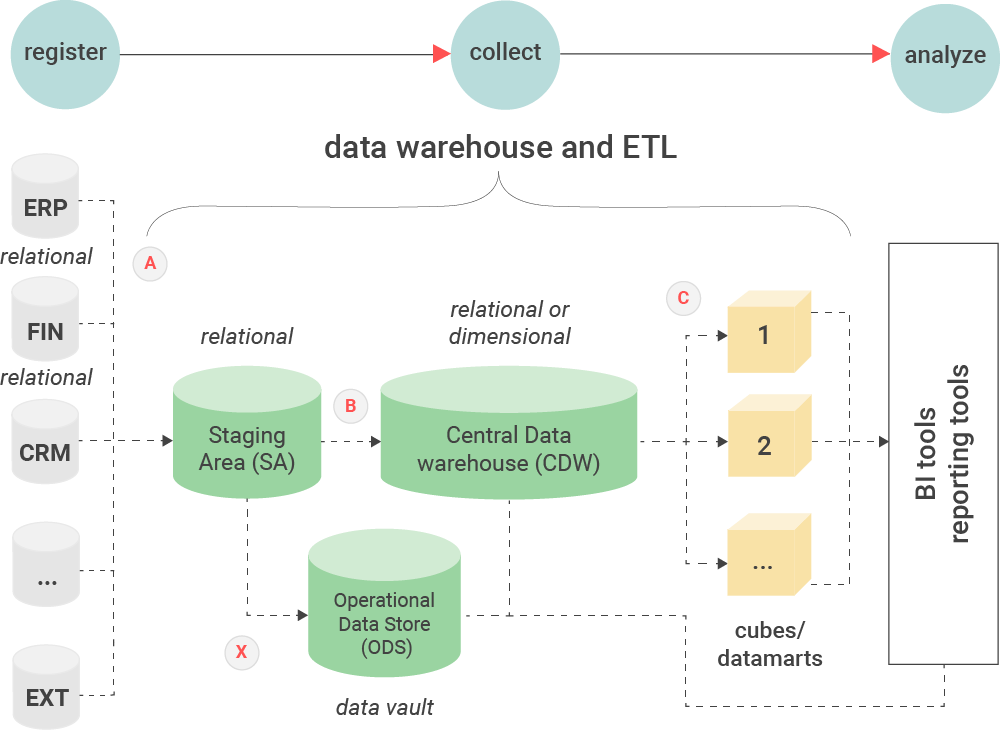

Data warehouses

A data warehouse is a central repository that can store multiple databases.

Extract, transform, and load (ETL) improves business intelligence and analytics by making the process more reliable, accurate, detailed, and efficient.

Historical context

ETL gives deep historical context to an organization's data. An enterprise can combine legacy data with data from new platforms and applications. You can view older datasets alongside more recent information, which gives you a long-term view of data.

Consolidated data view

ETL provides a consolidated view of data for in-depth analysis and reporting. Managing multiple datasets demands time and coordination and can result in inefficiencies and delays. ETL combines databases and various forms of data into a single, unified view. The data integration process improves the data quality and saves the time required to move, categorize, or standardize data. This makes it easier to analyze, visualize, and make sense of large datasets.

Accurate data analysis

ETL gives more accurate data analysis to meet compliance and regulatory standards. You can integrate ETL tools with data quality tools to profile, audit, and clean data, ensuring that the data is trustworthy.

Task automation

ETL automates repeatable data processing tasks for efficient analysis. ETL tools automate the data migration process, and you can set them up to integrate data changes periodically or even at runtime. As a result, data engineers can spend more time innovating and less time managing tedious tasks like moving and formatting data.

ETL originated with the emergence of relational databases that stored data in the form of tables for analysis. Early ETL tools attempted to convert data from transactional data formats to relational data formats for analysis.

Raw data was typically stored in transactional databases that supported many read and write requests but did not lend themselves well to analytics. You can think of each transaction as a row in a spreadsheet. For example, in an ecommerce system, the transactional database stored the purchased item, customer details, and order details in one transaction. Over the year, it contained a long list of transactions with repeat entries for the same customer who purchased multiple items during the year. Given the data duplication, it became cumbersome to analyze the most popular items or purchase trends in that year. To overcome this issue, ETL tools automatically converted this transactional data into relational data with interconnected tables. Analysts could use queries to identify relationships between the tables, in addition to patterns and trends.

Modern ETL

As ETL technology evolved, both data types and data sources increased exponentially. Cloud technology emerged to create vast databases (also called data sinks). Such data sinks can receive data from multiple sources and have underlying hardware resources that can scale over time. ETL tools have also become more sophisticated and can work with modern data sinks. They can convert data from legacy data formats to modern data formats.
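The ecommerce example, in which flat transactional rows are converted into interconnected relational tables, can be sketched in a few lines. This is a minimal illustration under stated assumptions, not how any particular ETL tool works: the sample transactions, the table names (`customers`, `items`, `orders`), and the helper functions are all hypothetical, and an in-memory SQLite database stands in for the analytical store.

```python
import sqlite3

# Hypothetical transactional records: one row per purchase, with the customer
# details repeated on every row.
TRANSACTIONS = [
    ("T1", "alice@example.com", "Alice", "keyboard", 2),
    ("T2", "bob@example.com", "Bob", "monitor", 1),
    ("T3", "alice@example.com", "Alice", "mouse", 1),
    ("T4", "alice@example.com", "Alice", "keyboard", 1),
]


def extract():
    """Extract: a real pipeline would read from the source system here."""
    return list(TRANSACTIONS)


def transform(rows):
    """Transform: split flat rows into de-duplicated customers and items,
    plus orders that reference them by id."""
    customers = {}  # email -> (customer_id, name)
    items = {}      # item name -> item_id
    orders = []     # (order_id, customer_id, item_id, quantity)
    for order_id, email, name, item, quantity in rows:
        if email not in customers:
            customers[email] = (len(customers) + 1, name)
        if item not in items:
            items[item] = len(items) + 1
        orders.append((order_id, customers[email][0], items[item], quantity))
    return customers, items, orders


def load(conn, customers, items, orders):
    """Load: write the three interconnected tables."""
    conn.executescript("""
        CREATE TABLE customers (id INTEGER PRIMARY KEY, email TEXT UNIQUE, name TEXT);
        CREATE TABLE items (id INTEGER PRIMARY KEY, name TEXT UNIQUE);
        CREATE TABLE orders (
            id TEXT PRIMARY KEY,
            customer_id INTEGER REFERENCES customers(id),
            item_id INTEGER REFERENCES items(id),
            quantity INTEGER
        );
    """)
    conn.executemany(
        "INSERT INTO customers VALUES (?, ?, ?)",
        [(cid, email, name) for email, (cid, name) in customers.items()],
    )
    conn.executemany(
        "INSERT INTO items VALUES (?, ?)",
        [(item_id, name) for name, item_id in items.items()],
    )
    conn.executemany("INSERT INTO orders VALUES (?, ?, ?, ?)", orders)
    conn.commit()


def most_popular_items(conn):
    """Analysis query: total quantity sold per item, most popular first."""
    return conn.execute("""
        SELECT items.name, SUM(orders.quantity) AS total
        FROM orders JOIN items ON items.id = orders.item_id
        GROUP BY items.name
        ORDER BY total DESC, items.name
    """).fetchall()


conn = sqlite3.connect(":memory:")
load(conn, *transform(extract()))
print(most_popular_items(conn))
# prints [('keyboard', 3), ('monitor', 1), ('mouse', 1)]
```

After the transform step, each customer appears once in `customers` rather than once per purchase, so a question such as "which items were most popular" becomes a single join and aggregate instead of a scan over duplicated rows.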
